Facts

| Start | 1 July 2013 |

| Participating Partners |

|

| Participating Persons |

|

Abstract

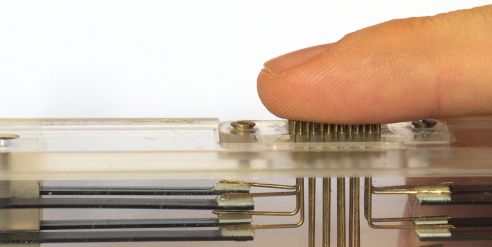

The quality of today’s visual and acoustic presentation requires little improvement, even on home or office computers. Haptic interaction, by contrast, is usually limited to a keyboard and mouse. Force feedback devices are already in use in research and development as well as in design applications, but they are not suitable for general use. It is desirable to replicate the impression of touching an object’s surface in a simple manner, without tools that limit the possible range of perception. Currently there are only a few prototypes, each recreating a single stimulus of tactile perception, and no devices that offer an integrated representation across several channels.

This project aims to use the core competencies of the partner institutes to develop a tactile display that addresses more than one channel of tactile perception through different stimuli. Apart from the micro- and precision mechanics, the necessary controls are also a subject of research in this project. Further goals are to identify equivalence classes of stimuli, analogous to those underlying established RGB-based color display techniques, and to construct a sensor capable of measuring stimuli from as many of these classes as possible.

Preceding Research

In the context of the EU-funded HAPTEX project (HAPtic sensing of virtual TEXtiles), a visual-haptic-tactile VR system was developed in 2007. This system allowed haptic/tactile impressions of a deformable textile fabric to be created in real time.

With regard to simultaneous visual-haptic-tactile perception, the developed system remains state of the art. During this project, the Welfenlab was responsible for developing the model and software for the haptic/tactile interaction with the textile, taking into account the different physical properties of the textile materials.